- What Is a Multimodal Data Labeling Workforce?

- Why Multimodal AI Requires Specialized Annotation Teams

- Core Components of an Effective Multimodal Data Labeling Workforce

- Challenges in Managing a Multimodal Data Labeling Workforce

- Industries Driving Demand for Multimodal Annotation Workforces

- Best Practices for Building a High-Performing Multimodal Data Labeling Workforce

- The Role of AI-Assisted Annotation in Workforce Optimization

- Why Businesses Are Investing in Dedicated Multimodal Annotation Teams

- How GetAnnotator Supports Multimodal Data Labeling Workflows

- Preparing Your AI for the Real World

- FAQs

Multimodal Data Labeling Workforce: The Foundation of Advanced AI Training

Artificial intelligence is rapidly evolving past single data formats. A few years ago, an AI model might have only analyzed text or recognized images. Now, cutting-edge systems process the world much like humans do—combining sight, sound, and language to make complex decisions.

This shift has given rise to multimodal AI systems that simultaneously digest image, video, audio, LiDAR, text, sensor, and conversational data. These sophisticated models hold immense potential, from powering self-driving cars to diagnosing complex medical conditions. However, training these models requires massive amounts of accurately labeled data across multiple formats.

High-quality annotation now depends on specialized human teams capable of interpreting overlapping data streams. A multimodal data labeling workforce bridges the gap between raw data and intelligent algorithms. As projects grow in scope, managing these diverse teams becomes a significant logistical challenge.

Scalable annotation platforms like GetAnnotator provide the infrastructure needed to manage complex workforce operations seamlessly. By keeping tools, workflows, and quality assurance in one place, they ensure these specialized teams can operate at peak efficiency.

What Is a Multimodal Data Labeling Workforce?

A multimodal data labeling workforce is a structured team of human annotators, quality assurance specialists, and project managers dedicated to labeling datasets that combine several overlapping data types.

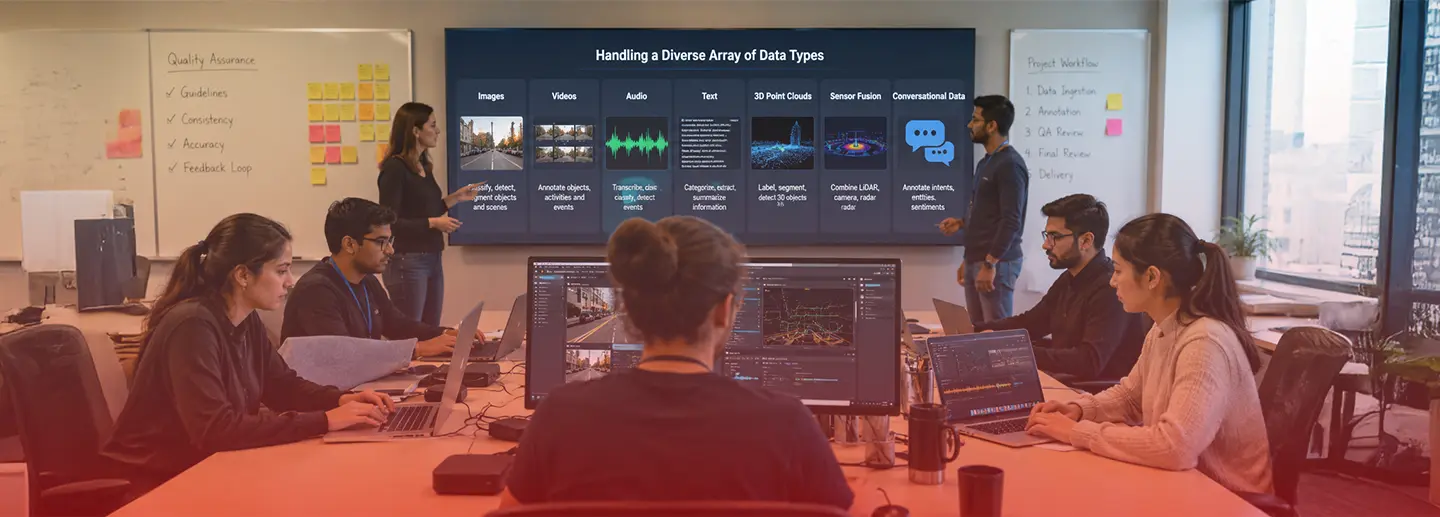

Unlike traditional annotation teams that focus on a single medium—like tagging photos of cats—multimodal teams manage intricate, interconnected datasets. They handle a diverse array of data, including:

- Images

- Videos

- Audio

- Text

- 3D point clouds

- Sensor fusion data

- Multilingual conversational data

Real-world examples of this work are everywhere. In autonomous vehicles, teams label street signs, radar data, and 3D LiDAR scans simultaneously. Healthcare AI relies on annotators mapping clinical text to medical imaging. Robotics, retail analytics, smart surveillance, and generative AI systems all depend on this multi-layered approach to understand context and environment.

Why Multimodal AI Requires Specialized Annotation Teams

Single annotators often cannot handle multiple data formats effectively. The mental shift required to jump from transcribing audio to identifying spatial coordinates in a 3D point cloud is significant. Different modalities require entirely different areas of expertise.

| Data Type | Workforce Skill Needed |
| --- | --- |
| Video Annotation | Temporal tracking expertise |
| Audio Labeling | Linguistic and acoustic understanding |
| LiDAR Annotation | 3D spatial awareness |
| NLP Annotation | Language and domain expertise |
| Sensor Fusion | Cross-modal validation skills |

AI model training has grown more complex. Developing reliable models requires labels that are synchronized across all of these modalities. If an autonomous vehicle’s camera data does not align with its LiDAR data, the model learns from mismatched scenes and can make catastrophic errors. Consistency and rigorous quality control are essential.
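To make the alignment requirement concrete, here is a minimal sketch of a timestamp check that pairs camera frames with LiDAR sweeps. The function name `match_frames`, the 50 ms tolerance, and the idea of matching on capture timestamps are illustrative assumptions, not a description of any specific platform.

```python
from bisect import bisect_left


def match_frames(camera_ts, lidar_ts, tolerance_s=0.05):
    """Pair each camera timestamp with the nearest LiDAR timestamp.

    Returns (pairs, unmatched): pairs holds (camera_t, lidar_t) tuples that
    fall within tolerance_s seconds of each other, and unmatched holds the
    camera timestamps with no LiDAR sweep close enough.
    The tolerance value is a hypothetical default, not a standard.
    """
    lidar_sorted = sorted(lidar_ts)
    pairs, unmatched = [], []
    for t in camera_ts:
        i = bisect_left(lidar_sorted, t)
        # Only the neighbours on either side of the insertion point can be nearest.
        candidates = lidar_sorted[max(i - 1, 0):i + 1]
        nearest = min(candidates, key=lambda s: abs(s - t), default=None)
        if nearest is not None and abs(nearest - t) <= tolerance_s:
            pairs.append((t, nearest))
        else:
            unmatched.append(t)
    return pairs, unmatched


pairs, unmatched = match_frames(
    camera_ts=[0.00, 0.10, 0.20, 0.45],
    lidar_ts=[0.01, 0.11, 0.19, 0.30],
)
```

Frames that land in `unmatched` would be held back from labeling or flagged for review, so annotators never label a camera image against the wrong 3D scan.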

Core Components of an Effective Multimodal Data Labeling Workforce

Building an operation capable of feeding advanced AI models requires a strategic approach to human resources and tooling.

Skilled Human Annotators

You need a domain-trained workforce with industry specialization. A medical AI project requires annotators with healthcare backgrounds, just as global NLP models require language-specific teams.

Workforce Scalability

Data needs fluctuate. Organizations must have the ability to ramp teams quickly, handling enterprise-scale datasets without losing momentum. Effective distributed workforce management ensures projects stay on track across different time zones.

Quality Assurance Layers

Accurate AI requires strict oversight. Multi-review systems, consensus validation, and gold-standard benchmarking help keep labels precise across millions of data points.
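As a rough illustration of two of these layers, the sketch below implements consensus validation as a majority vote and gold-standard benchmarking as accuracy against a set of known answers. The function names, the two-thirds agreement threshold, and the dictionary layout are assumptions chosen for the example.

```python
from collections import Counter


def consensus_label(votes, min_agreement=2 / 3):
    """Return the majority label, or None when agreement is too low.

    votes is a list of labels from independent annotators for one item.
    A None result means the item should be escalated to a reviewer.
    """
    if not votes:
        return None
    label, count = Counter(votes).most_common(1)[0]
    return label if count / len(votes) >= min_agreement else None


def gold_accuracy(submitted, gold):
    """Fraction of gold-standard items an annotator labeled correctly.

    Both arguments map item id -> label. Items outside the gold set
    are ignored, since their true label is unknown.
    """
    scored = [item for item in gold if item in submitted]
    if not scored:
        return 0.0
    correct = sum(submitted[item] == gold[item] for item in scored)
    return correct / len(scored)


agreed = consensus_label(["car", "car", "truck"])
score = gold_accuracy(
    submitted={"a": "car", "b": "bus", "c": "sign"},
    gold={"a": "car", "b": "truck"},
)
```

In practice the gold items are usually mixed into the normal task queue so annotators cannot tell which items are being scored.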

Project Managers & QA Leads

Managers handle workflow coordination, productivity monitoring, and escalation handling. They act as the glue keeping distributed annotators aligned with project goals.

Annotation Platform Infrastructure

Collaborative tooling, workflow automation, and real-time progress tracking bring everything together. GetAnnotator supports workforce collaboration and project scalability, giving managers the visibility needed to guide complex operations.

Challenges in Managing a Multimodal Data Labeling Workforce

Scaling these operations introduces a unique set of hurdles.

Cross-Modal Consistency

Maintaining alignment between video, audio, and text labels is difficult. An error in one modality can corrupt the holistic understanding of the AI model.

Workforce Training Complexity

Different training requirements for different modalities slow down onboarding. Teaching someone to label bounding boxes takes hours; teaching them sensor fusion validation takes weeks.

Annotation Fatigue

Long-form video and audio projects demand high concentration. Over time, this focus wanes, reducing overall efficiency and accuracy.

Quality Drift at Scale

Maintaining annotation standards across large, distributed teams requires constant vigilance. Without proper systems, individual interpretations can skew the dataset.
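One common way to quantify whether individual interpretations are diverging is an inter-annotator agreement score. The article does not name a specific metric; the sketch below uses Cohen's kappa for two annotators as an illustrative choice, and the sample labels are made up.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items.

    1.0 means perfect agreement and 0.0 means agreement no better than
    chance, given each annotator's own label frequencies.
    """
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty item list")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: probability both pick the same class independently.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical class
    return (observed - expected) / (1 - expected)


kappa = cohens_kappa(
    ["car", "car", "pedestrian", "pedestrian"],
    ["car", "car", "pedestrian", "car"],
)
```

Tracking a score like this per annotator pair over time gives managers an early signal of drift before it skews a large share of the dataset.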

Tool Fragmentation

Using multiple disconnected tools slows workflows and frustrates annotators. Centralized annotation platforms improve operational efficiency by keeping all modalities, guidelines, and QA tools under one roof.

Industries Driving Demand for Multimodal Annotation Workforces

Several major sectors rely heavily on diverse data annotation to push technological boundaries.

Autonomous Vehicles

Self-driving technology requires synchronized camera, LiDAR, and radar annotation to safely navigate roads.

Robotics & Embodied AI

Teaching machines to interact with physical spaces involves human motion tracking, egocentric video labeling, and sensor synchronization.

Healthcare AI

Modern diagnostic tools combine medical imaging, voice notes, and clinical text annotation to provide comprehensive patient insights.

Retail & Smart Surveillance

Stores optimize layouts and security through customer activity tracking, video analytics, and behavioral annotation.

Conversational AI

Virtual assistants need audio transcription, intent labeling, and emotion recognition to interact naturally with human users.

Best Practices for Building a High-Performing Multimodal Data Labeling Workforce

Success in data annotation depends on process optimization and clear communication.

Standardized Annotation Guidelines

Provide clear standard operating procedures (SOPs) and comprehensive edge-case handling documentation. Annotators should never have to guess how to label an anomaly.

Modality-Specific Training

Implement specialized onboarding programs. Tailor the training entirely to the specific data format the annotator will handle.

Continuous QA Monitoring

Regularly evaluate performance using accuracy scoring, random audits, and constant feedback loops.
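A random audit can be as simple as drawing a fixed share of completed items for a second look and flagging annotators whose audited accuracy falls below a target. The sketch below assumes a hypothetical 5% audit rate and a 95% accuracy target; both numbers are placeholders to tune per project.

```python
import random


def draw_audit_sample(item_ids, rate=0.05, seed=None):
    """Pick a random subset of completed items for manual review.

    Always audits at least one item when any exist. Passing a seed makes
    the draw reproducible, which helps when audits are disputed.
    """
    items = list(item_ids)
    if not items:
        return []
    k = min(len(items), max(1, round(len(items) * rate)))
    return random.Random(seed).sample(items, k)


def needs_feedback(audit_results, min_accuracy=0.95):
    """Return True when audited accuracy is below the target.

    audit_results is a list of booleans, True when the reviewer
    accepted the annotator's label as correct.
    """
    if not audit_results:
        return False
    accuracy = sum(audit_results) / len(audit_results)
    return accuracy < min_accuracy


sample = draw_audit_sample(range(200), rate=0.05, seed=42)
flagged = needs_feedback([True] * 9 + [False])
```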

Workforce Segmentation

Assign tasks based on expertise. Keep linguistic experts on text and audio, and spatial experts on LiDAR and video.
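Expertise-based assignment can be sketched as a small routing function that sends each task to the least-loaded annotator qualified for its modality. The annotator names, skill sets, and tie-breaking rule here are hypothetical.

```python
def route_tasks(tasks, annotator_skills):
    """Assign each task to the least-loaded annotator qualified for it.

    tasks is a list of (task_id, modality) tuples.
    annotator_skills maps annotator name -> set of modalities they handle.
    Returns (assignments, unassigned); ties are broken alphabetically.
    """
    load = {name: 0 for name in annotator_skills}
    assignments, unassigned = {}, []
    for task_id, modality in tasks:
        qualified = [n for n, skills in annotator_skills.items() if modality in skills]
        if not qualified:
            unassigned.append(task_id)  # no one trained for this modality yet
            continue
        chosen = min(qualified, key=lambda n: (load[n], n))
        assignments[task_id] = chosen
        load[chosen] += 1
    return assignments, unassigned


skills = {
    "ana": {"text", "audio"},   # linguistic specialist
    "ben": {"lidar", "video"},  # spatial specialist
    "cy": {"text"},
}
tasks = [("t1", "text"), ("t2", "text"), ("t3", "lidar"), ("t4", "radar"), ("t5", "audio")]
assignments, unassigned = route_tasks(tasks, skills)
```

Tasks that end up in `unassigned` point to a training gap, which is useful input when planning modality-specific onboarding.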

Scalable Annotation Infrastructure

Leverage cloud-based collaboration and integrated workflow management. Platforms like GetAnnotator help businesses manage large annotation teams efficiently by streamlining communication and task distribution.

The Role of AI-Assisted Annotation in Workforce Optimization

Automation is changing how human teams operate. Through human-in-the-loop annotation, pre-labeling automation, and model-assisted workflows, teams experience significantly faster turnaround times.

However, AI-assisted labeling still requires skilled human validation. Algorithms can draft the initial bounding boxes or transcriptions, but humans must verify the edge cases and correct subtle errors that machines miss.
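A minimal sketch of this division of labor: model pre-labels carry a confidence score, high-confidence items go to a quick human spot-check, and low-confidence items go to full human review. The `PreLabel` structure and the 0.9 threshold are assumptions for illustration; nothing is auto-accepted without a human seeing it.

```python
from dataclasses import dataclass


@dataclass
class PreLabel:
    item_id: str
    label: str
    confidence: float  # model score in [0, 1]


def triage(prelabels, review_threshold=0.9):
    """Split model pre-labels into two human queues.

    Items at or above the threshold get a fast spot-check; everything
    else gets a full review where the annotator may relabel from scratch.
    """
    spot_check, full_review = [], []
    for p in prelabels:
        if p.confidence >= review_threshold:
            spot_check.append(p.item_id)
        else:
            full_review.append(p.item_id)
    return spot_check, full_review


batch = [
    PreLabel("img-001", "stop_sign", 0.97),
    PreLabel("img-002", "pedestrian", 0.62),
    PreLabel("img-003", "cyclist", 0.90),
]
spot_check, full_review = triage(batch)
```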

Why Businesses Are Investing in Dedicated Multimodal Annotation Teams

Enterprises are prioritizing dedicated labeling teams to secure a competitive advantage. Clean, accurate data leads to faster AI deployment and better model accuracy. It also reduces training bias and improves how models handle edge cases.

Ultimately, data quality directly impacts AI performance. Scalability for enterprise AI systems is impossible without a reliable, specialized workforce fueling the algorithms.

How GetAnnotator Supports Multimodal Data Labeling Workflows

Managing complex data formats requires purpose-built infrastructure. GetAnnotator offers robust platform capabilities designed for modern AI development.

With multi-format annotation support, teams can process video, text, and 3D data in a single environment. The platform includes powerful workforce collaboration tools and comprehensive QA management workflows. Users benefit from scalable project handling, custom workflow configuration, and enterprise-ready annotation infrastructure.

Looking to scale your multimodal data labeling workforce? GetAnnotator helps teams manage complex AI annotation workflows with speed, quality, and collaboration.

Preparing Your AI for the Real World

Multimodal AI is increasing annotation complexity across every major industry. As models become more sophisticated, skilled annotation workforces are critical for AI success. Scalability, quality assurance, and workflow coordination are the pillars of a strong data pipeline. Centralized platforms help enterprises manage modern annotation operations efficiently, ensuring models perform accurately in the real world.

FAQs

What is a multimodal data labeling workforce?

Ans: It is a specialized team of annotators, QA leads, and managers trained to label diverse, overlapping data formats like video, audio, text, and LiDAR simultaneously.

Why does multimodal AI require specialized annotation teams?

Ans: Modern AI models need to process multiple data streams to understand real-world contexts accurately, requiring synchronized and precisely labeled data across all formats.

Which industries drive demand for multimodal annotation workforces?

Ans: Key industries include autonomous vehicles, healthcare AI, robotics, retail surveillance, and conversational AI development.

What are the challenges in managing a multimodal data labeling workforce?

Ans: Common challenges include maintaining cross-modal consistency, handling complex training requirements, fighting annotation fatigue, and preventing quality drift at scale.

How does AI-assisted annotation help optimize the workforce?

Ans: Model-assisted workflows use pre-labeling automation to handle initial annotations, allowing human workers to focus on faster validation and edge-case correction.

What features should a multimodal annotation platform have?

Ans: Platforms should feature multi-format support, collaborative tooling, automated QA workflows, and scalable project management infrastructure.

How does GetAnnotator support multimodal data labeling workflows?

Ans: GetAnnotator provides centralized, enterprise-ready infrastructure featuring multi-format support, robust QA tools, and workflow automation to manage large, complex annotation teams effectively.
